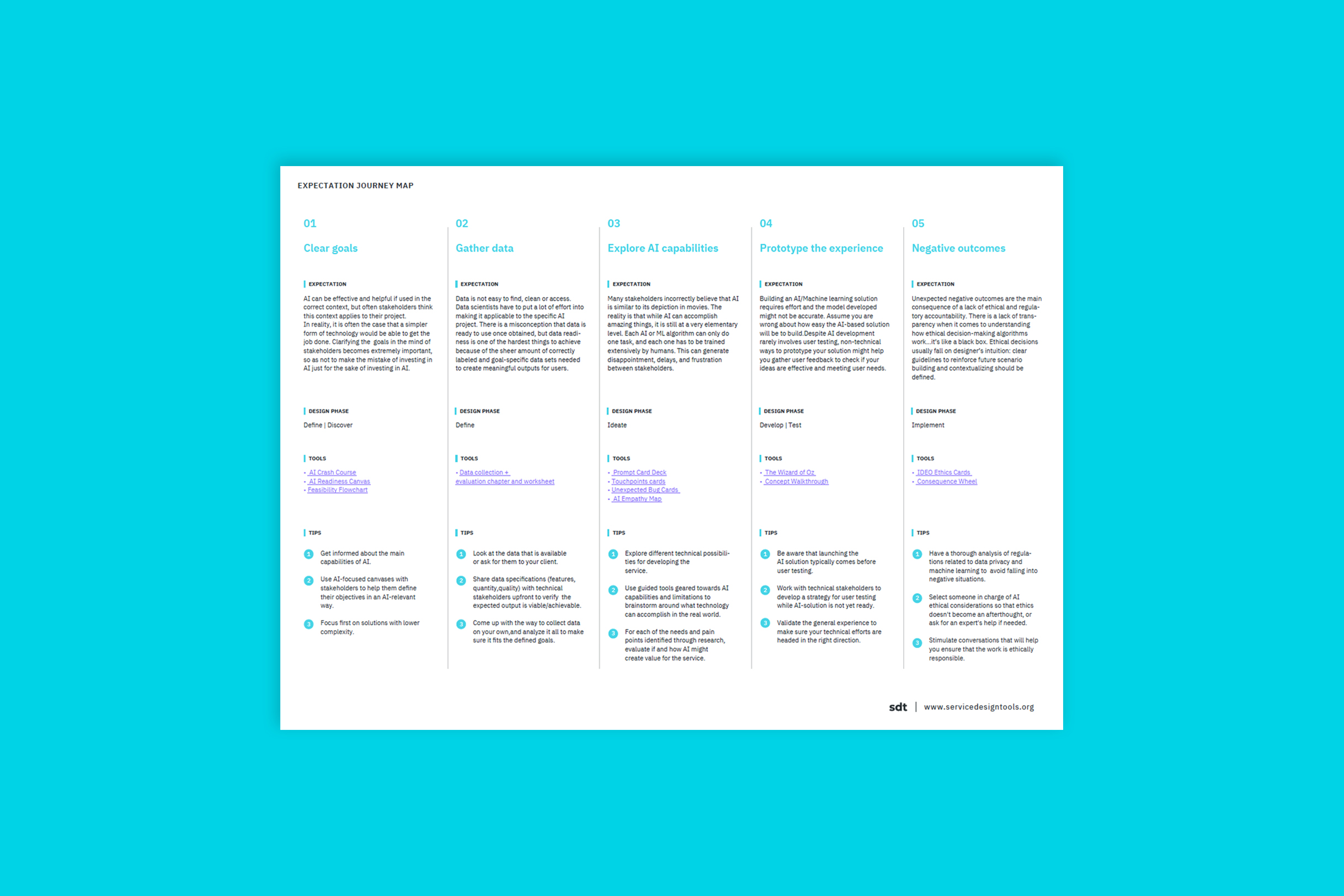

main steps

01

Make sure AI is required for your defined goal

It is commonly assumed that stakeholders have clear objectives and that AI will help achieve them, but this is often not the case. Collaborative sessions with stakeholders can help you define the main output of the project in relation to user needs. Clearly defining user needs and goals will help you determine whether AI is relevant or whether the goals can be met with a simpler technology. It is also useful to assess feasibility in terms of resources early in the project.

tips

-Knowing what AI can currently do is very helpful for designers; resources such as the AI Crash Course can help with this.

-During stakeholder meetings, tools such as the AI Readiness Canvas and the Feasibility Flowchart can be used to align expectations and assess whether they can feasibly be implemented.

-Focus first on solutions with lower complexity.

-At this step, it is useful to ask whether AI fits the value proposition of the designed service and how much value it will provide for the user if it is successful.

02

Prepare to spend time gathering relevant data

As early as possible, look at the data that is available and how it might help users. If you do not have enough data, look for public datasets or pay for access to more data. Share data specifications (features, quantity, quality) upfront. This way there will be no surprises and, consequently, no loss of trust in what you deliver. Stay in touch with your data scientists and keep your stakeholders informed about how the datasets are coming along and how useful they are proving.

tips

-Look at the data that is available, or ask your client for it.

-Share data specifications (features, quantity, quality) with technical stakeholders upfront to verify the expected output is viable/achievable.

-Come up with your own way to collect data, and analyze all of it to make sure it fits the defined goals.

-Keep in mind: even if you have data, your technical stakeholders will need a lot of time to clean it and make it usable, so try not to move forward until they have verified that the expected output is viable/achievable. You can use the data collection + evaluation chapter and worksheet to better understand and structure this process with them.

-Important questions to ask at this point are: What information would a human need to accomplish the service goal? How will the datasets be obtained? Do you have access to them, and what resources are needed to acquire them?
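The data specification mentioned in the tips above (features, quantity, quality) can be made concrete so that designers and technical stakeholders check the same things. Below is a minimal Python sketch under stated assumptions: `DataSpec`, `check_dataset`, and the example field names are hypothetical, the dataset is assumed to be a list of records (dicts), and "quality" is reduced to a single threshold on empty values per feature. Real projects will need richer checks defined together with the data scientists.

```python
from dataclasses import dataclass


@dataclass
class DataSpec:
    """Hypothetical data specification agreed with technical stakeholders."""
    required_features: set[str]  # features: fields the model is expected to need
    min_rows: int                # quantity: minimum number of records
    max_missing_ratio: float     # quality: max share of empty values per feature


def check_dataset(spec: DataSpec, rows: list[dict]) -> list[str]:
    """Return plain-language gaps between a dataset (list of records) and the spec."""
    gaps = []

    # Quantity: is there enough data at all?
    if len(rows) < spec.min_rows:
        gaps.append(f"quantity: {len(rows)} rows, need at least {spec.min_rows}")

    # Features: which required fields never appear in any record?
    present = set().union(*(row.keys() for row in rows))
    for feature in sorted(spec.required_features - present):
        gaps.append(f"features: '{feature}' is not in the data")

    # Quality: how often is each available required feature empty?
    # (present is non-empty only when rows is non-empty, so no division by zero)
    for feature in sorted(spec.required_features & present):
        empty = sum(1 for row in rows if row.get(feature) in (None, ""))
        ratio = empty / len(rows)
        if ratio > spec.max_missing_ratio:
            gaps.append(f"quality: '{feature}' is empty in {ratio:.0%} of rows")

    return gaps


if __name__ == "__main__":
    # Hypothetical example: a spec for a service that needs three fields.
    spec = DataSpec({"age", "visit_reason", "outcome"}, min_rows=3, max_missing_ratio=0.25)
    rows = [
        {"age": 34, "visit_reason": "checkup"},
        {"age": None, "visit_reason": "pain"},
    ]
    for gap in check_dataset(spec, rows):
        print(gap)
    # quantity: 2 rows, need at least 3
    # features: 'outcome' is not in the data
    # quality: 'age' is empty in 50% of rows
```

Returning a list of readable gaps rather than a single pass/fail flag is a deliberate choice here: the output can be shared directly with non-technical stakeholders when discussing whether the expected output is viable.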

03

Use AI capabilities that actually create value for your service

There is generally a lack of knowledge about what AI can really achieve, which can lead to disappointment, delays, and frustration among stakeholders. For each need, statement, and pain point identified through user research, use a card deck to provoke dialogue and explore technical possibilities. You can also use a flowchart to evaluate feasibility and how AI might help improve or innovate your service. To make sure AI is well integrated, brainstorm technical possibilities from the start, focusing on the channels where machine learning can actually add value.

tips

-Explore different technical possibilities for developing the service.

-Use guided tools geared towards AI capabilities and limitations to brainstorm around what technology can accomplish in the real world.

-For each of the needs and pain points identified through research, evaluate if and how AI might create value for the service.

-Organizing a workshop with clients based on AI capabilities and limitations will ease technical communication and understanding and reduce the risk of disappointment among all stakeholders. Bring some tools to facilitate the workshop: Prompt Card Deck, Touchpoints cards, Unexpected Bug Cards, and AI Empathy Map can all help guide the discussion.

-Keep evaluating whether using AI in the service is feasible and necessary: ask whether something simpler, such as an automation algorithm, could be used, or whether a human could perform the task with less effort or at lower cost.

04

Switch up your normal process

Building an AI solution is neither quick nor easy. It requires experts, time, and labeling effort, and even with all of these the model might not be accurate. The process of building AI is complex, and the methodology rarely involves user testing before implementation. Because it is hard to test without a working model in place, prototypes are often launched with the expectation that they will improve based on real user feedback.

tips

-Be aware that launching the AI solution typically comes before user testing.

-Work with technical stakeholders to develop a strategy for user testing while the AI solution is not yet ready.

-Validate the general experience to make sure your technical efforts are headed in the right direction.

-Although testing usually happens after implementation, there are a few non-technical ways to prototype your solution. For example, the Wizard of Oz and Concept Walkthrough techniques can be used to test whether your ideas are effective and meet user needs.

-Ask yourself whether this is the right moment to spend time and resources building the AI, and whether the solution can still bring value even if it does not meet the initial "smartness" expectations.

05

Take control and avoid uncertainty

Negative outcomes from using AI often result from a lack of planning and of visualizing future scenarios. To avoid this, take the time early in the process to run a quick workshop on the ethical implications of the AI's outputs, using an available tool. An existing format that provides sets of actions and prompts specific to AI can stimulate conversations that help ensure the work is ethically responsible, culturally considerate, and humanistic.

tips

-Thoroughly analyze the regulations related to data privacy and machine learning to avoid running into legal or ethical problems.

-Select someone in charge of AI ethical considerations so that ethics doesn’t become an afterthought, or ask for an expert’s help if needed.

-Stimulate conversations that will help you ensure that the work is ethically responsible.

-Start considering ethical aspects early in the process, and designate a person responsible for continuously safeguarding the ethics of the service. This helps provide accountability. If needed, ask for an expert's advice to understand the laws and regulations related to data privacy in specific contexts. You can use IDEO Ethics Cards and the Consequence Wheel to map your way through the complexity of AI ethics.

-Useful questions to ask are: Will some jobs be compromised because of the development of this AI solution? What can be done about it? Might the designed AI solution have inherited biases that could harm some groups of people? Does it preserve users' interests?
